C# Multithreading

Foreground thread vs Background thread

Background threads do not keep the application running, even if they are still executing. However, as long as a foreground thread runs, the application will not close.
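As a rough illustration of the distinction above, the sketch below creates a thread, checks its default `IsBackground` value, and then turns it into a background thread. The sleeping lambda is a hypothetical stand-in for real work and is not part of the original notes.

```csharp
using System;
using System.Threading;

// Hypothetical stand-in for real work: just sleep for a moment.
var worker = new Thread(() => Thread.Sleep(200));

// Threads created with the Thread constructor are foreground threads by default.
bool defaultIsBackground = worker.IsBackground;
Console.WriteLine(defaultIsBackground); // False

// A background thread does not keep the process alive
// once every foreground thread has finished.
worker.IsBackground = true;
Console.WriteLine(worker.IsBackground); // True

worker.Start();
Console.WriteLine("Main thread is done; the process may exit without waiting for the worker.");
```

If the `IsBackground = true` line is removed, the process should stay alive until the worker's sleep finishes.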

Console.WriteLine(thread1.IsBackground);

thread1.IsBackground = true;

Starting a new thread

Thread thread1 = new Thread(() => PrintPluses(30));

thread1.Start();

ThreadPool

Creating and starting new threads is a pretty costly operation from the performance point of view. So instead of creating new threads all the time, it would be better to have a pool of threads. When a new work item needs to be scheduled on a thread, we will look into this pool and look for a thread that is not currently used. Then we will schedule this work item to run on this thread. Once the work is finished, the thread will be returned to the pool, ready to be reused. This way, the cost of creating those threads will only be paid once, and after that each thread will be reused many times.
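The reuse described above can be observed with a small sketch. It queues a few work items and prints the ID of the pool thread that runs each one; the item count and the `CountdownEvent` used to wait for completion are choices made for this illustration only.

```csharp
using System;
using System.Threading;

const int workItemCount = 5;
int completed = 0;

// CountdownEvent lets the main thread wait until every work item has signaled.
using var allDone = new CountdownEvent(workItemCount);

for (int i = 0; i < workItemCount; i++)
{
    int itemNumber = i; // copy the loop variable so each work item sees its own value
    ThreadPool.QueueUserWorkItem(_ =>
    {
        Console.WriteLine(
            $"Item {itemNumber} runs on thread {Environment.CurrentManagedThreadId}, " +
            $"IsThreadPoolThread = {Thread.CurrentThread.IsThreadPoolThread}");
        Interlocked.Increment(ref completed);
        allDone.Signal();
    });
}

// Pool threads are background threads, so the main thread must wait explicitly.
allDone.Wait();
Console.WriteLine($"Completed work items: {completed}");
```

The same thread ID will often appear for more than one item, which is the pool reusing a thread instead of creating a new one. The exact IDs and ordering vary between runs.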

ThreadPool.QueueUserWorkItem(PrintA);

TPL (Task Parallel Library)

Nowadays, we use higher-level mechanisms to implement multithreading and asynchrony in our apps. The main tool we will focus on is the TPL. The core component of the TPL is the Task class.

Task

A task represents a unit of work that can be executed asynchronously on a separate thread (remember: this will be a background thread). It resembles a thread pool work item but at a higher level of abstraction. It allows for more efficient and more scalable use of system resources. The TPL queues tasks to the ThreadPool. A task is a relatively lightweight object, and we can create many of them to enable fine-grained parallelism.

Another benefit of using tasks over plain threads is that tasks, and the framework built around them, provide a rich set of methods that support waiting for a task to finish, canceling a running task, executing some code after the task is completed, handling exceptions thrown in the code executed within a task, and more. All these things are pretty hard to achieve using only the plain old Thread class.

Task task = new Task(() => PrintPluses(200));

task.Start();

// OR

Task.Run(() => PrintPluses(200));

// Under the hood, both approaches use the ThreadPool

After the task is started, the main thread continues its work, so the code executed within the task may run in parallel with the code from the main thread and with other tasks.

Task<int> taskWithIntResult = Task.Run(() => CalculateLength("Hi"));

// taskWithIntResult will represent a task that can produce an int,

// but it is not an int yet. Only once this task execution is completed

// can the int result be extracted from this task object

Console.WriteLine("Length is: " + taskWithIntResult.Result);

// We can use the Result property to retrieve it. But the problem is,

// accessing the Result property is a blocking operation, which means,

// when we use it, the main thread is stopped until task is finished.

var task = Task.Run(() => {

Thread.Sleep(1000);

Console.WriteLine("Task is finished");

});

task.Wait();

// When using tasks returning a value, we could use the Result property

// But for non-generic task, it will not carry any result, so instead,

// use the Wait method, which means, wait here until this task is

// completed and only move on to the next line after that

// we can use Wait method for any task, not only non-generic, if we use

// it on a task producing a result, it will stop the current thread

// until the result is ready.

var task1 = Task.Run(() => {...});

var task2 = Task.Run(() => {...});

Task.WaitAll(task1, task2);

// Wait for multiple tasks to be finished.

Task Continuation

// A continuation is a function that will be executed after a task is

// completed. We can define a continuation using ContinueWith method

Task taskContinuation =

Task.Run(() => CalculateLength("Hello there"))

.ContinueWith(taskWithResult =>

Console.WriteLine("Length is " + taskWithResult.Result))

.ContinueWith(completedTask => Console.WriteLine("Final"));

// Schedule a continuation for multiple tasks

var tasks = new[]

{

Task.Run(() => CalculateLength("Hello")),

Task.Run(() => CalculateLength("Hi")),

Task.Run(() => CalculateLength("Hola")),

};

var continuationTask = Task.Factory.ContinueWhenAll(

tasks,

completedTasks => Console.WriteLine(string.Join(", ", completedTasks.Select(task => task.Result))));

// tasks must be an array, sorry about that

// second parameter is a lambda which takes the same collection of tasks

// but when this lambda is triggered, those tasks will already be

// completed

Task Cancellation

TPL utilizes the concept of cooperative cancellation. It means that the code that requests the cancellation and the code executed within the canceled task must both cooperate to cancel this task.

A cancellation token is an object shared by the code that requests the cancellation and the canceled task. The class that can provide us with such a token is called CancellationTokenSource.

var cancellationTokenSource = new CancellationTokenSource();

var task = Task.Run(() => NeverendingMethod(cancellationTokenSource), cancellationTokenSource.Token);

string userInput;

do

{

Console.WriteLine("Type 'stop' to end the neverending method:");

userInput = Console.ReadLine();

} while (userInput?.ToLower() != "stop");

cancellationTokenSource.Cancel();

Console.WriteLine("Program is finishing...");

Console.ReadKey();

static void NeverendingMethod(CancellationTokenSource cancellationTokenSource)

{

while (true)

{

if (cancellationTokenSource.IsCancellationRequested)

{

throw new OperationCanceledException(cancellationTokenSource.Token);

// otherwise the task will be ending in RanToCompletion instead of cancelled

}

// Instead of the if statement above, we can also call cancellationTokenSource.Token.ThrowIfCancellationRequested(); the effect is the same. If cancellation is requested, an OperationCanceledException will be thrown

Console.WriteLine("This method will never end!");

Thread.Sleep(1000); // Sleep for 1 second to avoid flooding the console

}

}

So why do we need to pass cancellationTokenSource.Token to the Task.Run method when it seems to have no use at all? It may sometimes happen that cancellation is requested before the scheduler even starts executing the task. If we don't pass the cancellation token here, the task will start executing anyway, even though it has already been requested to be canceled, which is simply a waste of resources. It's better not to start it at all. So it is generally recommended to always pass this token to the method responsible for triggering the task.
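The effect of passing the token to Task.Run can be sketched as follows. Here the token is canceled before the task is created, which is an extreme version of "canceled before the scheduler picks it up"; the `bodyExecuted` flag is a hypothetical helper used only to show whether the task body ever ran.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

var cts = new CancellationTokenSource();
cts.Cancel(); // cancellation is requested before the task is even created

bool bodyExecuted = false;

// Because the token is passed to Task.Run, the task body is never executed.
Task task = Task.Run(() => { bodyExecuted = true; }, cts.Token);

try
{
    task.Wait();
}
catch (AggregateException ex) when (ex.InnerException is TaskCanceledException)
{
    Console.WriteLine("Waiting on a canceled task throws a wrapped TaskCanceledException.");
}

Console.WriteLine(task.Status);  // Canceled
Console.WriteLine(bodyExecuted); // False
```

Without the second argument to Task.Run, the lambda would run and the task would end in RanToCompletion, even though cancellation had already been requested.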

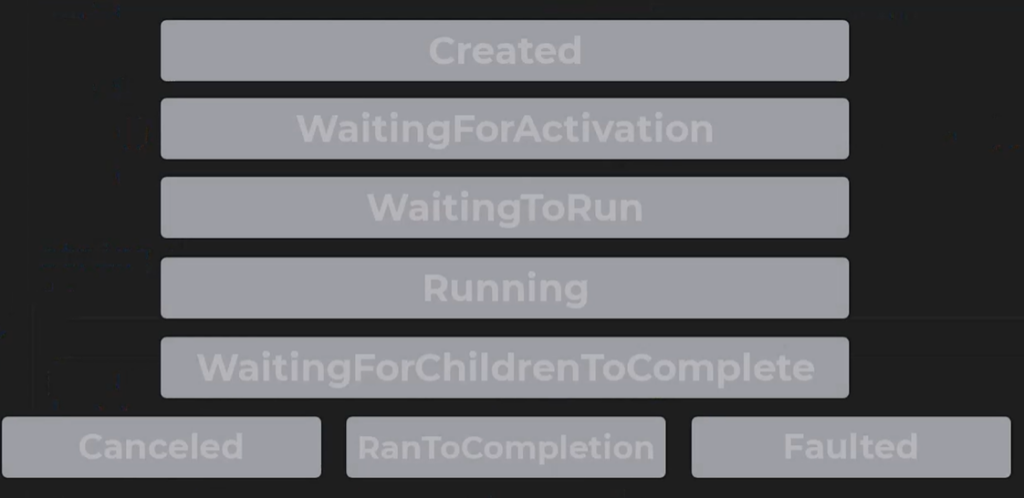

Task Lifecycle

- Created: The starting status of a task created with the constructor. It will not be updated until the task is scheduled.

- WaitingForActivation: Starting status for tasks created through methods like ContinueWith. It means the task waits to be scheduled after some other operation is completed.

- WaitingToRun: Once a task is scheduled, whether because it's a continuation of an operation that is already finished, or simply because the Start method has been called on it, its status changes to WaitingToRun. The task is ready to be started, and it's waiting for the scheduler to pick it up and run it.

- Running: Self-explanatory. The task's code is currently executing.

- WaitingForChildrenToComplete: A child task, or nested task, is a task that is created in the code executed by another task, which is known as the parent task. If a task has child tasks, it may need to wait until all of them are completed, and this is when its status is WaitingForChildrenToComplete.

- RanToCompletion: If everything goes well, the task life cycle ends here.

- Canceled: If the task has been cancelled before it can be completed, task life cycle ends here.

- Faulted: If there was some error during the task execution, task life cycle ends here.
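Some of the statuses listed above can be observed directly. The sketch below is only an illustration: the sleeping lambda and the `failingWork` delegate are hypothetical, and short-lived statuses such as WaitingToRun and Running are not printed because catching them reliably depends on timing.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// A task built with the constructor stays in Created until Start is called.
var succeeding = new Task(() => Thread.Sleep(50));
TaskStatus beforeStart = succeeding.Status;
Console.WriteLine(beforeStart); // Created

// A continuation waits for its antecedent, so it begins in WaitingForActivation.
Task continuation = succeeding.ContinueWith(_ => { });
TaskStatus continuationInitial = continuation.Status;
Console.WriteLine(continuationInitial); // WaitingForActivation

succeeding.Start();
succeeding.Wait();
continuation.Wait();
Console.WriteLine(succeeding.Status); // RanToCompletion

// A task whose code throws ends up in Faulted.
Action failingWork = () => throw new InvalidOperationException("boom");
Task failing = Task.Run(failingWork);
try
{
    failing.Wait();
}
catch (AggregateException)
{
    // Expected: Wait rethrows the task's exception wrapped in AggregateException.
}
Console.WriteLine(failing.Status); // Faulted
```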

Task<int> taskFromResult = Task.FromResult(10);

// Sometimes we create a task from a result like this.

// Such a task has no code to execute, and from the very moment we create

// it, its status is already set to RanToCompletion and it carries the

// result we gave it. It's useful for unit testing.

Exception Thrown by Other Threads

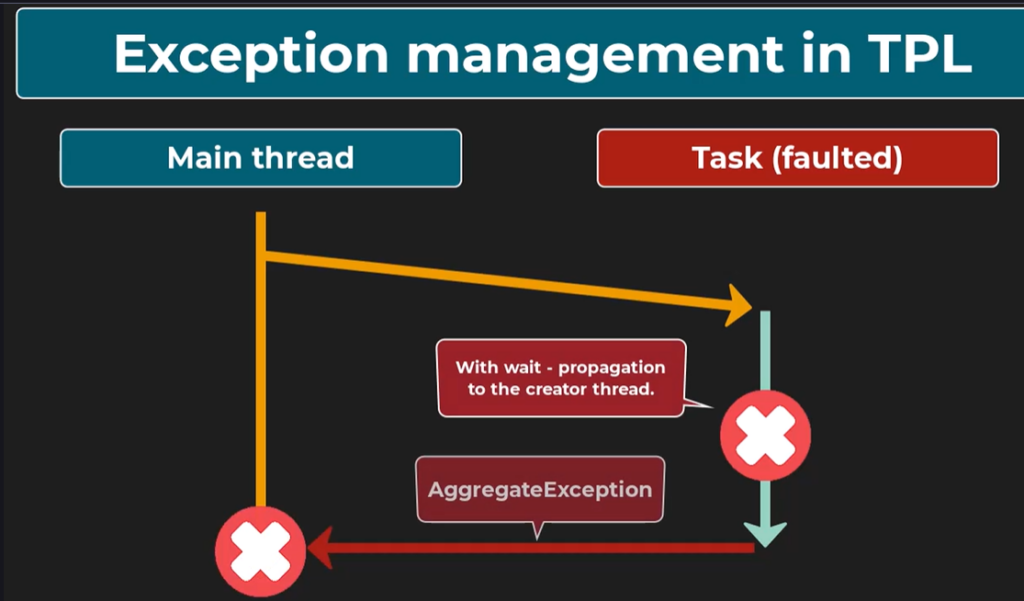

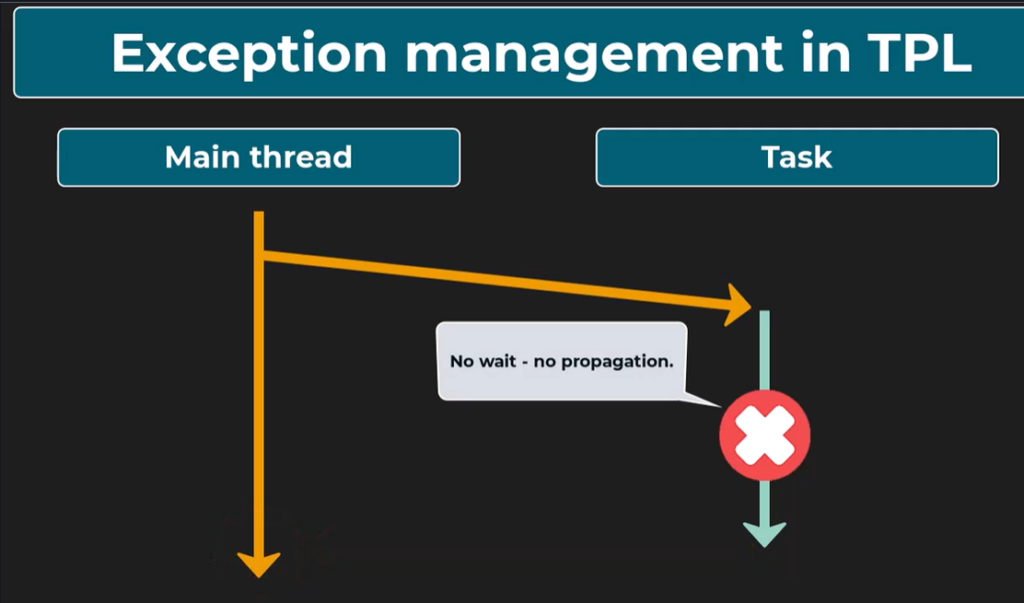

By default, exceptions thrown on one thread can only be caught on the same thread. Exceptions thrown on other threads will simply remain unhandled, and they will crash our application. Using tasks instead of plain threads, we have much more control over exceptions.

Suppose an exception is thrown within the code executed by a task. In that case, it will only be propagated to the thread that created this task if we await the task completion, for example, by calling the Wait method or by using the Result property. If we wait for the task completion, the exception thrown within this task code will be wrapped in an AggregateException. This is because more than one exception can be thrown within a task. Also, we can await multiple tasks with one command, and all those exceptions will be aggregated within this AggregateException.

If we don’t wait for the task completion, the exception will not reach the thread that started the task. We will only be able to see that it happened by checking whether the task status is Faulted and whether the Exception property carries exceptions.
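Both cases described above can be sketched side by side. The `failingWork` delegate and its message are hypothetical, and `SpinWait.SpinUntil` is used here only to let the second task finish without calling Wait or Result on it.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

Action failingWork = () => throw new InvalidOperationException("Something went wrong");

// Case 1: waiting on the task rethrows the exception, wrapped in AggregateException.
Task waited = Task.Run(failingWork);
string caughtMessage = "";
try
{
    waited.Wait();
}
catch (AggregateException ex)
{
    caughtMessage = ex.InnerException?.Message ?? "";
    Console.WriteLine($"Caught {ex.InnerException?.GetType().Name}: {caughtMessage}");
}

// Case 2: nothing waits on the task, so no exception reaches this thread.
Task notWaited = Task.Run(failingWork);
SpinWait.SpinUntil(() => notWaited.IsCompleted); // polls the task; does not throw

Console.WriteLine(notWaited.Status);                             // Faulted
Console.WriteLine(notWaited.Exception?.InnerException?.Message); // Something went wrong
```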

So how do we handle the exception asynchronously?

var task = Task.Run(() => MethodThrowingException())

.ContinueWith(

faultedTask => Console.WriteLine("Exception Caught: " + faultedTask.Exception.Message),

TaskContinuationOptions.OnlyOnFaulted);

// This crucial argument decides that this continuation will only be

// triggered if a task ends up in a faulted state.

How to handle exceptions carried within AggregateException?

var task = Task.Run(() => Divide(2, null))

.ContinueWith(

faultedTask => {

faultedTask.Exception.Handle(ex =>

{

Console.WriteLine("Division task finished");

// Handle the exception and return true; if it can't be handled, return false.

// The current lambda will be applied to all exceptions carried by the AggregateException.

// If all of them are successfully handled, no new exception will be thrown and the program will continue undisturbed.

// The continuation task will finish successfully and its status will be RanToCompletion.

// However, all the exceptions for which false is returned will be wrapped in another AggregateException

// and thrown from the Handle method. In other words, if this lambda returns false for any exception,

// the Handle method will throw a new AggregateException, and the status of the continuation task will be Faulted.

// For example, if there are 3 exceptions and this lambda handles only two of them, Handle throws a new AggregateException that contains only the one unhandled exception.

if (ex is ArgumentNullException)

{

Console.WriteLine("Arguments can't be null");

return true;

}

if (ex is DivideByZeroException)

{

Console.WriteLine("Can't divide by zero.");

return true;

}

Console.WriteLine("Unexpected exception type");

return false;

});

},

TaskContinuationOptions.OnlyOnFaulted);

Thread.Sleep(1000);

Console.WriteLine("Program is finished");

Console.ReadKey();

static float Divide(int? a, int? b)

{

if (a is null || b is null)

{

throw new ArgumentNullException("Both parameters must have a value.");

}

if (b == 0)

{

throw new DivideByZeroException("The second parameter cannot be zero.");

}

return a.Value / (float)b.Value;

}

Thread Safety

Thread safety is the property of a program that ensures multiple threads can correctly and safely execute it without causing unexpected behavior.

Atomic Operation

An atomic operation is an operation that is indivisible. In other words, it is always performed in one go without a chance of being interrupted. It is composed of a single, very basic step. For example, assigning one to the x variable.

On the other hand, let’s consider the operation of incrementing x by 1. It is not atomic because under the hood 2 steps need to happen: 1. the value of x+1 is calculated and assigned to a temp variable; 2. this temp variable is assigned to x.

Race Condition

A situation in concurrent programming where the outcome of a program depends on the timing of events. It occurs when multiple threads access shared data or resources, and the final outcome is dependent on the order of execution.
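A race condition on a non-atomic increment can be reproduced with a short sketch. Several tasks increment two shared counters: one with a plain `++`, the other with `Interlocked.Increment`, which is a technique brought in here for comparison and is not covered in the notes above. The task count and iteration count are arbitrary values chosen for this illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

const int incrementsPerTask = 100_000;
int unsafeCounter = 0;
int safeCounter = 0;

var workers = new Task[4];
for (int t = 0; t < workers.Length; t++)
{
    workers[t] = Task.Run(() =>
    {
        for (int i = 0; i < incrementsPerTask; i++)
        {
            unsafeCounter++;                        // read-modify-write, not atomic
            Interlocked.Increment(ref safeCounter); // performed as one atomic step
        }
    });
}
Task.WaitAll(workers);

int expected = workers.Length * incrementsPerTask;
Console.WriteLine($"Expected:    {expected}");
Console.WriteLine($"Interlocked: {safeCounter}");   // always equals expected
Console.WriteLine($"Plain ++:    {unsafeCounter}"); // often lower; varies between runs
```

When two threads read the same old value of `unsafeCounter` and both write back that value plus one, one of the increments is lost, which is why the plain counter can end up below the expected total.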

Lock

The critical section is the segment of code where multiple threads or processes access shared resources, such as common variables and files, and perform write operations on them. We can limit access to the critical section to one thread at a time by using a lock.

class Counter

{

private readonly object _lock = new object();

public int Value { get; private set; }

public void Increment()

{

lock (_lock)

{

Value++;

}

}

public void Decrement()

{

lock (_lock)

{

Value--;

}

}

}

Because locks prevent multiple threads from performing some work simultaneously, they limit the performance benefit we take from multithreading. That’s why it is important to only use lock statements where they are really needed.